Prompt management and hardcoding represent two distinct ways to handle prompts in LLM applications. Hardcoding is simple and works for small projects, but it creates bottlenecks as applications grow. Prompt management systems, on the other hand, treat prompts as independent, versioned assets, allowing faster updates and better collaboration.

Key Takeaways:

- Hardcoding Pros: Quick to set up, no extra infrastructure, ideal for prototypes or MVPs.

- Hardcoding Cons: Slow updates, versioning chaos, excludes non-developers, and doesn't scale well.

- Prompt Management Pros: Instant updates, centralized control, enables collaboration, and supports large-scale applications.

- Prompt Management Cons: Requires setup (database, API, UI) and more infrastructure.

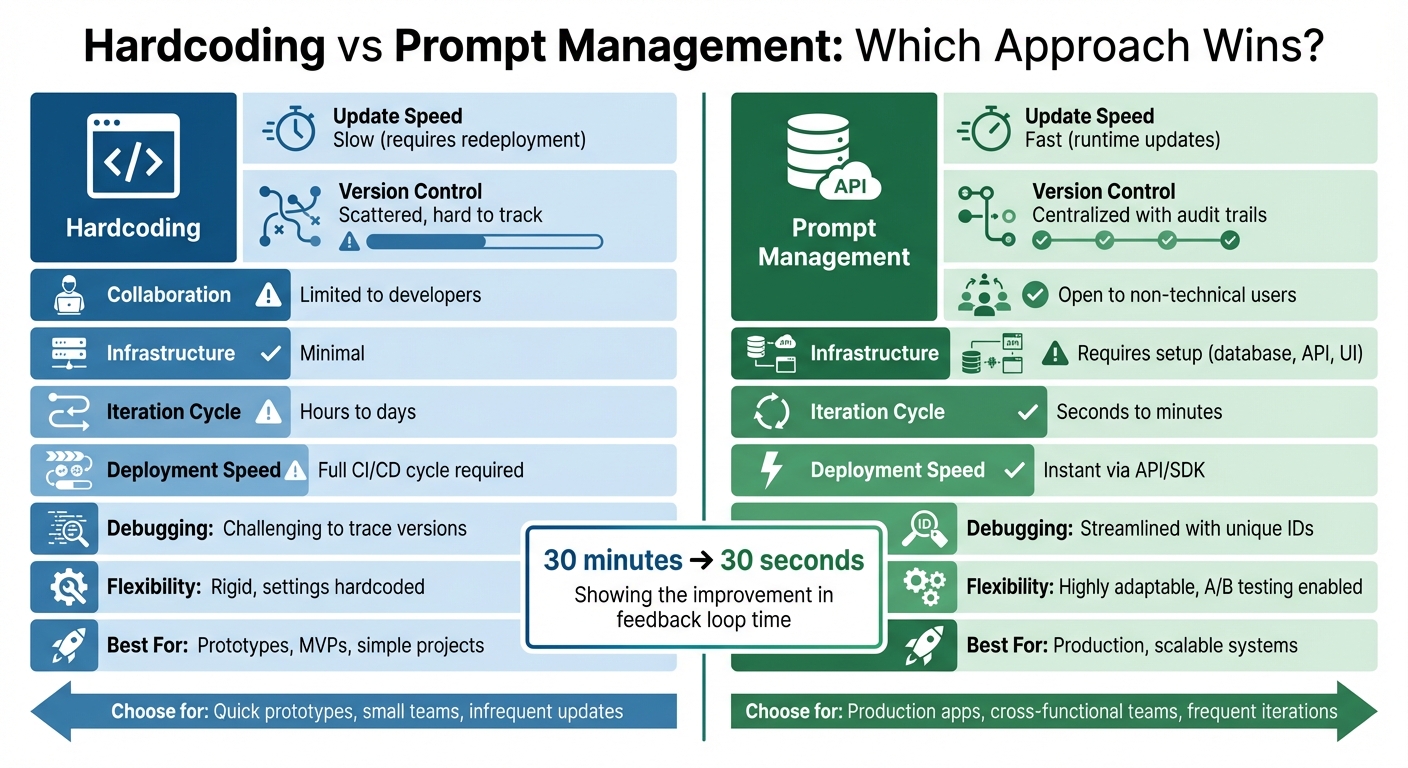

Quick Comparison:

| Feature | Hardcoding | Prompt Management |
|---|---|---|
| Update Speed | Slow (requires redeployment) | Fast (runtime updates) |
| Version Control | Scattered, hard to track | Centralized, with audit trails |
| Collaboration | Limited to developers | Open to non-technical users |
| Infrastructure | Minimal | Requires setup (database, API) |
| Best For | Prototypes, simple projects | Production, scalable systems |

Bottom Line: If you need frequent updates, collaboration, or scalability, prompt management is the better choice. Hardcoding works for early-stage projects but can become a burden as complexity grows.

Hardcoding vs Prompt Management: Feature Comparison Chart

Hardcoding Prompts: How It Works and Where It Falls Short

Why Developers Choose Hardcoding

Hardcoding prompts offers a quick and straightforward way to kickstart an AI project. By embedding the prompt text directly into the code, developers can bypass the need for additional infrastructure or external APIs. For those working on a minimum viable product or testing a proof of concept, this approach is convenient. It integrates seamlessly with familiar tools like an IDE and Git, keeping prompts alongside the rest of the project code. The draw is obvious: no upfront costs and immediate control. For small-scale projects, this simplicity often meets all the requirements. However, as the project grows, this once-effective method starts to show its cracks.
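Here is a minimal hypothetical example of a hardcoded prompt in Python. The constant names, the `build_summary_request` helper, and the `"example-model"` identifier are illustrative assumptions, and no real LLM client is called. The point is that both the wording and the model settings live in the source file, so changing either one means editing code and redeploying.

```python
# Hypothetical example: prompt text and model settings embedded in application code.
SUMMARY_PROMPT = (
    "You are a helpful assistant. Summarize the following support ticket "
    "in two sentences:\n\n{ticket}"
)
MODEL_SETTINGS = {"model": "example-model", "temperature": 0.2, "max_tokens": 200}


def build_summary_request(ticket: str) -> dict:
    """Assemble a request payload; any change to the wording requires a redeploy."""
    return {
        "prompt": SUMMARY_PROMPT.format(ticket=ticket),
        **MODEL_SETTINGS,
    }


if __name__ == "__main__":
    print(build_summary_request("Customer cannot reset their password."))
```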

Problems That Emerge at Scale

While hardcoding may work well initially, it quickly becomes a bottleneck as applications expand and mature.

"It worked. Until it didn't."

A real-world example of this comes from Databox's engineering team. In January 2026, after a year of building LLM-powered agents, they ran into the limits of their hardcoded prompts. The prompts were scattered across Python files alongside embedded model configurations, so even minor fixes, like correcting a typo, required a full code deployment. Product managers had to endure lengthy waits for what should have been quick adjustments. To address the issue, the team adopted a new system that uses PostgreSQL as the centralized source of truth and LangSmith for editing. Prompts are now updated via webhooks without restarts, which reduced their feedback loop from 30 minutes to near-instant.
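The source does not describe how Databox's webhook integration is implemented. As a rough sketch of the general idea only, assuming a hypothetical JSON payload with `name`, `version`, and `template` fields, a webhook handler can refresh an in-process cache so that running agents pick up new prompt text without a restart:

```python
from __future__ import annotations

import json
import threading


class PromptCache:
    """Hypothetical in-process prompt cache that a webhook handler refreshes at runtime."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._prompts: dict[str, dict] = {}

    def get(self, name: str) -> dict | None:
        with self._lock:
            return self._prompts.get(name)

    def apply_update(self, name: str, version: int, template: str) -> bool:
        """Store the new version unless an equal or newer one is already cached."""
        with self._lock:
            current = self._prompts.get(name)
            if current is not None and current["version"] >= version:
                return False  # ignore stale or duplicate webhook deliveries
            self._prompts[name] = {"version": version, "template": template}
            return True


def handle_prompt_webhook(cache: PromptCache, body: bytes) -> bool:
    """Hypothetical webhook handler: parse the JSON body and refresh the cache."""
    payload = json.loads(body)
    return cache.apply_update(payload["name"], int(payload["version"]), payload["template"])
```

Ignoring versions that are not newer than the cached one guards against webhook deliveries that arrive late or twice; a production handler would also need to authenticate the request.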

The root problem lies in how hardcoding ties the lifecycle of prompts to the lifecycle of the application code. Code changes typically follow a rigorous and infrequent testing schedule, whereas prompt updates often need to happen quickly and frequently. A simple 30-second tweak can end up triggering a 30-minute deployment cycle.

Hardcoding also leads to version control chaos. With prompts scattered across repositories, configuration files, and even Slack messages, tracking which version is live in production becomes nearly impossible. On top of that, this approach excludes key contributors like product managers, legal teams, and copywriters. These domain experts often have the expertise to refine a prompt’s tone or intent but are unable to make changes without involving developers.

"The moment your application moves beyond a single LLM call to handle multiple tasks, agents, or workflows, unstructured prompting becomes technical debt that compounds with every feature you add."


Prompt Management Systems: What They Offer

Core Advantages of Prompt Management

Instead of embedding prompts directly into code, prompt management systems store them in a centralized registry. Applications can then retrieve these prompts at runtime via an API or SDK. This separation is a game-changer - it allows application code and prompts to evolve independently. While application updates might follow a slower CI/CD cycle, prompts often need updates as frequently as daily or even hourly.
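A minimal sketch of this separation, using hypothetical names (`PromptSource`, `InMemoryPromptSource`, `build_ticket_summary_prompt`) rather than any particular vendor's SDK: application code asks for a prompt by name, and where the text actually comes from is an injected detail.

```python
from __future__ import annotations

from typing import Protocol


class PromptSource(Protocol):
    """Anything that can return a prompt template by name (registry API, SDK, file, ...)."""

    def get_template(self, name: str) -> str: ...


class InMemoryPromptSource:
    """Stand-in for a real registry client, useful for tests and local development."""

    def __init__(self, templates: dict[str, str]) -> None:
        self._templates = dict(templates)

    def get_template(self, name: str) -> str:
        return self._templates[name]


def build_ticket_summary_prompt(source: PromptSource, ticket: str) -> str:
    # Application code refers to the prompt only by name; its wording lives elsewhere.
    return source.get_template("ticket-summary").format(ticket=ticket)
```

Swapping `InMemoryPromptSource` for a client that calls a registry API would change where prompts come from without touching `build_ticket_summary_prompt`.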

One of the standout benefits? Prompt updates are instant. Changes can be pushed live in seconds, cutting feedback loops from 30 minutes to just 30 seconds. Advanced systems even bundle model parameters - like temperature, max tokens, and provider - together with the prompt text, treating them as a cohesive unit.
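One way to treat prompt text and model parameters as a single unit is an immutable configuration object, sketched below. The field names and the `to_request` payload shape are assumptions for illustration, not the schema of any specific prompt management product.

```python
from __future__ import annotations

from dataclasses import dataclass
from string import Formatter


@dataclass(frozen=True)
class PromptConfig:
    """A prompt template bundled with the model settings it was tuned for (hypothetical schema)."""

    name: str
    template: str
    provider: str
    model: str
    temperature: float = 0.0
    max_tokens: int = 256

    def variables(self) -> set[str]:
        """Placeholder names that must be supplied when rendering the template."""
        return {field for _, field, _, _ in Formatter().parse(self.template) if field}

    def to_request(self, **values: str) -> dict:
        """Render the template and return it together with its model settings."""
        missing = self.variables() - set(values)
        if missing:
            raise ValueError(f"missing template variables: {sorted(missing)}")
        return {
            "provider": self.provider,
            "model": self.model,
            "temperature": self.temperature,
            "max_tokens": self.max_tokens,
            "prompt": self.template.format(**values),
        }
```

Because the object is frozen, a change to either the wording or a parameter such as `temperature` has to be published as a new configuration, which fits the versioning model described next.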

"Prompts are not code; they are configuration and content." – Alex Ostrovskyy, MLOps Architect

By keeping prompts out of the codebase, these systems address the main drawbacks of hardcoding, offering scalability, flexibility, and improved collaboration.

Version control is another key feature. Every prompt change creates a new, immutable version with a unique identifier, and the system keeps side-by-side diffs and a detailed audit trail. Semantic tags like prod, staging, or dev can direct applications to specific prompt versions. Promoting a prompt to production is as simple as reassigning a tag in the UI. If something goes wrong, rolling back to a previous version is usually just a click away.
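The versioning and tagging model can be sketched in memory as follows. `VersionedPromptRegistry` is a hypothetical class: versions are append-only, each carries a content hash as an identifier, and tags such as `prod` are movable pointers, so promotion and rollback only change which version a tag references.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    number: int
    template: str
    digest: str  # short content hash used as a unique identifier


class VersionedPromptRegistry:
    """Minimal in-memory sketch: append-only versions plus movable tags."""

    def __init__(self) -> None:
        self._versions: dict[str, list[PromptVersion]] = {}
        self._tags: dict[tuple[str, str], int] = {}  # (name, tag) -> version number
        self._history: dict[tuple[str, str], list[int]] = {}  # earlier tag targets

    def publish(self, name: str, template: str) -> PromptVersion:
        versions = self._versions.setdefault(name, [])
        digest = hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]
        version = PromptVersion(len(versions) + 1, template, digest)
        versions.append(version)  # existing versions are never modified
        return version

    def set_tag(self, name: str, tag: str, number: int) -> None:
        """Point a tag (e.g. 'prod') at a version; this is how promotion works."""
        if not 1 <= number <= len(self._versions.get(name, [])):
            raise KeyError(f"{name} has no version {number}")
        key = (name, tag)
        if key in self._tags:
            self._history.setdefault(key, []).append(self._tags[key])
        self._tags[key] = number

    def rollback(self, name: str, tag: str) -> int:
        """Undo the last move of a tag and return the version it now points to."""
        previous = self._history.get((name, tag))
        if not previous:
            raise LookupError(f"no earlier version recorded for {name}@{tag}")
        self._tags[(name, tag)] = previous.pop()
        return self._tags[(name, tag)]

    def get(self, name: str, tag: str = "prod") -> PromptVersion:
        return self._versions[name][self._tags[(name, tag)] - 1]
```

In use, `set_tag("summary", "prod", 2)` promotes version 2, and `rollback("summary", "prod")` returns the tag to whatever it referenced before; no prompt text is ever overwritten.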

For added reliability, some systems use fallback chains. For example, they might first retrieve prompts from a local database, then an external API, and finally a hardcoded default if all else fails. This ensures prompts remain accessible even if a primary source is unavailable. A great example comes from Databox’s engineering team, which, in January 2026, built a system allowing product managers to adjust wording and model settings through a UI - without causing any downtime.
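The fallback chain can be sketched as an ordered list of lookups tried one after another. The lookup functions in this sketch are hypothetical stand-ins for a local database query and a remote registry call. This version only handles misses and errors; a real client would also need to bound each call with a timeout.

```python
from __future__ import annotations

import logging
from typing import Callable, Optional

logger = logging.getLogger(__name__)

# A lookup takes a prompt name and returns its template, returns None on a miss,
# or raises on failure.
PromptLookup = Callable[[str], Optional[str]]


def resolve_prompt(
    name: str,
    lookups: list[tuple[str, PromptLookup]],
    default: str,
) -> tuple[str, str]:
    """Try each lookup in order; fall back to a hardcoded default if all fail.

    Returns (template, label_of_the_source_that_answered).
    """
    for label, lookup in lookups:
        try:
            template = lookup(name)
        except Exception as exc:  # one failing layer must not break the whole chain
            logger.warning("prompt source %s failed for %s: %s", label, name, exc)
            continue
        if template:
            return template, label
    return default, "hardcoded-default"
```

Returning the label of the source that answered makes it easy to log how often the application is running on fallbacks.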

When Prompt Management Makes Sense

Prompt management systems shine in environments where collaboration and quick iteration are crucial. If product managers are stuck waiting 30 minutes for a typo fix or domain experts need to file tickets for every minor adjustment, it’s a clear sign that hardcoding is holding the team back. With a web-based interface, non-technical stakeholders like product managers, legal teams, and domain experts can edit and test prompts independently - no Git access required.

These systems also excel in adapting to real-world changes. For instance, in October 2025, Nearform transitioned to a shared prompt registry. This shift allowed for independent prompt management, faster iterations, and the reuse of common prompt snippets.

Applications handling multiple tasks, agents, or workflows benefit even more. When prompts are scattered across source files, it can lead to technical debt and inefficiencies. A centralized system provides a single source of truth, complete with testing tools, systematic evaluations to catch quality issues, and observability features. These tools trace every production output back to the exact prompt version and model configuration, ensuring transparency and control.
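As a rough illustration of that traceability, each generation can be logged together with the prompt version and model settings that produced it. The `GenerationTrace` record and `record_generation` helper are hypothetical; dedicated observability tools capture far richer data, but the principle of stamping every output with its inputs is the same.

```python
from __future__ import annotations

import json
import time
import uuid
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class GenerationTrace:
    """Hypothetical record linking one model output to the exact prompt and settings used."""

    trace_id: str
    prompt_name: str
    prompt_version: int
    model: str
    temperature: float
    output: str
    created_at: float


def record_generation(
    prompt_name: str,
    prompt_version: int,
    model: str,
    temperature: float,
    output: str,
    sink: list[str],
) -> GenerationTrace:
    """Build a trace record and append it to `sink` as one JSON line."""
    trace = GenerationTrace(
        trace_id=str(uuid.uuid4()),
        prompt_name=prompt_name,
        prompt_version=prompt_version,
        model=model,
        temperature=temperature,
        output=output,
        created_at=time.time(),
    )
    # A plain list stands in for a log pipeline or trace store.
    sink.append(json.dumps(asdict(trace)))
    return trace
```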

Infrastructure and Operational Differences

Simplicity vs. Flexibility

Hardcoding prompts directly into your code requires no extra setup, allowing for quick deployment. However, this approach ties even the smallest updates to the deployment cycle, creating a bottleneck. Alex Ostrovskyy, an MLOps Architect, highlights this issue succinctly:

"Leaving prompts hardcoded in your application is the modern equivalent of magic numbers in source code".

On the other hand, prompt management systems demand an upfront investment in infrastructure. This includes setting up a backend API, a versioned database, and a management UI for easier updates by non-technical users. The payoff? You gain the ability to tweak prompts in seconds, bypassing the need for a full redeployment. While hardcoding prioritizes simplicity, prompt management systems lean toward operational flexibility - each with its own set of trade-offs.

What Each Approach Requires

With hardcoding, prompts are stored in the code repository and deployed through the usual CI/CD pipeline. This method works for small-scale projects but struggles as complexity grows.

Prompt management systems, in contrast, rely on a more sophisticated setup. This usually involves three key components:

- A Management UI for editing prompts.

- A Backend API to serve as the central source of truth.

- A lightweight SDK for runtime integration.

These systems often require additional infrastructure, such as PostgreSQL for database management and dedicated API endpoints. To ensure reliability, advanced setups include a fallback mechanism. For instance, a typical chain might look like this: local database → external API → hardcoded default.

A practical example comes from Databox's engineering team. They transitioned from hardcoded Python prompts to a PostgreSQL-based system with a three-layer fallback chain and 1-second timeouts per layer. This ensures agents never wait more than 3 seconds to resolve a prompt.
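The per-layer timeout idea can be sketched with Python's `concurrent.futures`, which lets the caller stop waiting on a slow lookup after a fixed time. This is not Databox's code; `resolve_with_timeouts` and the lookups in the demo are hypothetical. With three lookups and `timeout_s=1.0`, the worst case is roughly three seconds before the hardcoded default is returned.

```python
from __future__ import annotations

import concurrent.futures
import time
from typing import Callable, Optional

PromptLookup = Callable[[str], Optional[str]]

# Shared worker pool. A lookup that times out keeps running in its thread, but its
# result is ignored; if the pool is saturated, queued lookups can also time out.
_POOL = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def resolve_with_timeouts(
    name: str,
    lookups: list[PromptLookup],
    default: str,
    timeout_s: float = 1.0,
) -> str:
    """Try each lookup with its own timeout; worst case is about len(lookups) * timeout_s."""
    for lookup in lookups:
        future = _POOL.submit(lookup, name)
        try:
            template = future.result(timeout=timeout_s)
        except concurrent.futures.TimeoutError:
            continue  # layer too slow; move on to the next one
        except Exception:
            continue  # layer failed outright
        if template:
            return template
    return default


if __name__ == "__main__":
    def slow_registry(name: str) -> Optional[str]:
        time.sleep(2.0)  # simulates an unresponsive remote API
        return "remote template"

    def local_copy(name: str) -> Optional[str]:
        return "local template"

    start = time.monotonic()
    print(resolve_with_timeouts("summary", [slow_registry, local_copy], "default"))
    print(f"resolved in {time.monotonic() - start:.1f}s")
```

Note that `Future.result(timeout=...)` only stops the caller from waiting; the timed-out lookup keeps running in its worker thread until it finishes.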

The benefits become clear at scale. Some teams using structured prompt management report cutting debugging time for LLM-related issues in half and iterating on prompts three times faster than with hardcoded prompts.

Side-by-Side Comparison: Hardcoding vs. Prompt Management

Key Comparison Factors

Here's a breakdown of how hardcoding and prompt management systems stack up against each other across several attributes:

| Attribute | Hardcoding Prompts | Prompt Management System |
|---|---|---|
| Deployment Speed | Slow; requires a full CI/CD cycle, build, and redeployment | Instant; runtime updates via API/SDK without redeployment |
| Iteration Cycle | Hours to days; dependent on engineering schedules | Seconds to minutes; works independently of code releases |
| Version Control | Git-based; often scattered across .env or .txt files | Centralized registry; includes immutable versions and side-by-side comparisons |
| Collaboration | Limited to technical users; requires PRs and developer access | Open to cross-functional teams; UI-driven for PMs and domain experts |
| Debugging Ease | Challenging; difficult to trace outputs to specific versions | Streamlined; unique IDs and audit trails link outputs to versions |
| Observability | Manual; relies on custom logging and anecdotal feedback | Built-in; supports LLM-as-a-Judge and automated evaluations |
| Infrastructure | Simple; requires no additional services | More complex; includes registry, database, API, and SDK |
| Flexibility | Rigid; model settings are often hardcoded | Highly adaptable; enables A/B testing, canary rollouts, and hot-swapping |
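The table lists A/B testing and canary rollouts among the flexibility benefits. A common way to implement either is deterministic bucketing: hash a stable identifier so that a fixed share of users sees the candidate prompt version. The sketch below assumes a user ID is available and that versions are referenced by number; `choose_prompt_version` is a hypothetical helper.

```python
import hashlib


def choose_prompt_version(user_id: str, stable: int, candidate: int, candidate_share: float) -> int:
    """Deterministically route a fixed share of users to a candidate prompt version.

    Hashing the user ID (instead of picking randomly per request) keeps each user
    on the same version, which makes A/B comparisons easier to interpret.
    """
    if not 0.0 <= candidate_share <= 1.0:
        raise ValueError("candidate_share must be between 0 and 1")
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")  # uniform integer in [0, 2**64)
    threshold = int(candidate_share * 2**64)  # integer comparison avoids float rounding at 1.0
    return candidate if bucket < threshold else stable
```

Ramping a canary is then a matter of raising `candidate_share` in configuration (for example 0.05, then 0.25, then 1.0); users already on the candidate stay there as the share grows.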

The biggest contrast lies in iteration speed. Hardcoding turns what could be a 30-second change into a process that takes 30 minutes or more, whereas prompt management systems remove this bottleneck by separating prompt content from application code.

Collaboration is another stark difference. Hardcoding forces non-technical stakeholders to depend on developers for updates, creating delays. Prompt management systems empower these stakeholders with a user-friendly interface, allowing them to make changes directly without technical intervention.

When it comes to infrastructure, hardcoding is simpler but less forgiving. Rolling back a problematic prompt requires reverting code and redeploying the entire application. On the other hand, prompt management systems let you revert to previous versions instantly with no need for redeployment. These distinctions make it clear which approach aligns better with different workflows and team dynamics.


How to Choose the Right Approach

Selecting the right prompt strategy is key to balancing speed and control when working with large language models (LLMs). Your choice will depend on factors like your application's development stage, the makeup of your team, and specific operational needs.

When Hardcoding Works

Hardcoding is a practical option for prototypes, MVPs (minimum viable products), or simple applications where prompts rarely change and the team consists only of developers. If you're creating a one-off proof of concept with a single LLM call and have a tight budget for tools, hardcoding is a quick, straightforward solution that needs minimal setup.

For early-stage experiments, where you're still figuring out product-market fit, hardcoding allows you to move fast without adding unnecessary complexity. However, this approach can quickly become a roadblock if you need to update prompts multiple times a day or involve non-technical stakeholders. Alex Ostrovskyy, an MLOps Architect, sums it up well:

"Leaving prompts hardcoded in your application is the modern equivalent of magic numbers in source code. It's a quick way to get started, but it creates a brittle, unmanageable system that cripples your ability to iterate."

Once your project grows beyond a simple prototype, the need for faster, more collaborative prompt updates will push you toward a more flexible approach.

When Prompt Management Becomes Necessary

Prompt management is a must for production-level applications where reliability, quick iterations, and collaboration are non-negotiable. If your team frequently updates prompts based on model performance or user feedback, a management system eliminates the slow deployment cycles that come with hardcoding - cutting what could take 30 minutes down to just seconds.

The need for prompt management often arises when non-developers, like product managers, domain experts, or legal teams, need to view, edit, or approve prompt content. A management system provides a user-friendly interface, so they can make changes without diving into the code.

Using a database-first management system with features like semantic tagging and fallback chains empowers non-technical team members to independently adjust prompts and model settings while keeping the system stable. This approach is ideal when safety measures like instant rollbacks, staged deployments, A/B testing, or regression testing are critical. These tools are especially valuable for business-critical workflows, where even small prompt changes can affect thousands of users. Additionally, features like audit trails and role-based access control ensure compliance with enterprise-level requirements.
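Regression testing before promotion can be as simple as running the candidate prompt over a fixed set of cases and blocking the promotion if too many fail. In this sketch, `call_model` is a hypothetical callable standing in for a real LLM call, and each case's `check` is a plain predicate; production setups might replace these checks with automated evaluators such as LLM-as-a-Judge.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RegressionCase:
    inputs: dict[str, str]
    check: Callable[[str], bool]  # returns True if the model output is acceptable
    description: str = ""


def passes_regression(
    template: str,
    cases: list[RegressionCase],
    call_model: Callable[[str], str],
    min_pass_rate: float = 1.0,
) -> tuple[bool, list[str]]:
    """Run a candidate prompt over fixed cases before it is promoted to production."""
    failures = []
    for case in cases:
        output = call_model(template.format(**case.inputs))
        if not case.check(output):
            failures.append(case.description or repr(case.inputs))
    # An empty test set counts as a failure rather than a silent pass.
    pass_rate = 1 - len(failures) / len(cases) if cases else 0.0
    return pass_rate >= min_pass_rate, failures
```

Wiring a check like this into the step that reassigns a `prod` tag turns regression testing into a gate rather than a manual habit.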

Conclusion

The decision between hardcoding and using a prompt management system depends on your team's current needs and how those needs are likely to evolve. Hardcoding works well for quick prototypes or straightforward applications that don’t require frequent updates. However, as your application grows and more people need to modify prompts, hardcoding can slow things down and create deployment bottlenecks.

On the other hand, prompt management systems shine when speed, collaboration, and reliability become priorities. These systems decouple prompt updates from code deployments, allowing non-developers to make changes, roll back updates instantly, and maintain version control without relying on engineers. The trade-off is added complexity - managing databases, APIs, and fallback mechanisms becomes essential to keep everything running smoothly.

The best choice depends on how often your prompts need updates. If prompt content evolves faster than the application code, or if team members like product managers or legal experts need to adjust tone or compliance without waiting for developers, a management system eliminates that friction. For early-stage projects, hardcoding might be sufficient, but as delays, versioning issues, or non-developer update requests increase, it’s time to consider transitioning.

Ultimately, your approach should match both your current stage and future goals. Hardcoded prompts can work for a scrappy MVP, but a production system supporting a large user base benefits from the structure, collaboration tools, and audit capabilities that a management system provides.

FAQs

When should I switch from hardcoded prompts to prompt management?

When your project involves growing complexity, frequent updates, or collaboration between team members, it’s time to consider prompt management. Hardcoding prompts into your application can make even small adjustments a hassle, as each change requires redeploying code. This slows down your ability to adapt quickly.

By using a prompt management system, you can treat prompts as version-controlled assets. This allows for faster iteration, easier testing, and smoother collaboration. It also ensures consistency across your application, makes it easier for non-technical contributors to participate, and enables you to update prompts without touching the underlying code. This flexibility becomes especially important as models and requirements continue to evolve.

How are prompt versions safely promoted and rolled back?

A prompt management system helps manage prompt versions safely by offering structured version control and tracking. These tools organize and store prompts, allowing teams to easily compare, update, or revert to previous versions when needed. They enable teams to review changes, test updates in controlled settings, and roll them out gradually, reducing potential risks. By maintaining a clear history of changes, these systems eliminate the challenges of hardcoded prompts and support stable production workflows.

What happens if the prompt registry or API is down?

If the prompt registry or API is unreachable, an application that fetches prompts only at runtime could fail to obtain them, which is a real risk in production. A well-designed prompt management setup mitigates this by caching the last version it retrieved and by using the fallback chain described earlier (local database → external API → hardcoded default). With that in place, the application keeps running on known-good prompts during a temporary outage; what is lost is the ability to publish or roll back prompt changes until the registry recovers.
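A minimal sketch of the caching idea, assuming a hypothetical `fetch` function that calls the registry and raises when it is unreachable. The client serves fresh prompts while the registry is up, falls back to the last copy it successfully retrieved during an outage, and uses a bundled default only if it has never fetched that prompt.

```python
from __future__ import annotations

import time
from typing import Callable, Optional


class CachedPromptClient:
    """Hypothetical client: fresh prompts when the registry is up, last known good when it is not."""

    def __init__(
        self,
        fetch: Callable[[str], str],
        ttl_s: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._ttl_s = ttl_s
        self._clock = clock
        self._cache: dict[str, tuple[str, float]] = {}  # name -> (template, fetched_at)

    def get(self, name: str, default: Optional[str] = None) -> str:
        cached = self._cache.get(name)
        if cached and self._clock() - cached[1] < self._ttl_s:
            return cached[0]  # still fresh; skip the network call
        try:
            template = self._fetch(name)
        except Exception:
            if cached:
                return cached[0]  # registry down: serve the stale copy
            if default is not None:
                return default  # never fetched successfully: use the bundled default
            raise
        self._cache[name] = (template, self._clock())
        return template
```

The injectable `clock` exists only to make the time-to-live behavior easy to test.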