Best Prompt Management Tools for AI Teams in 2026

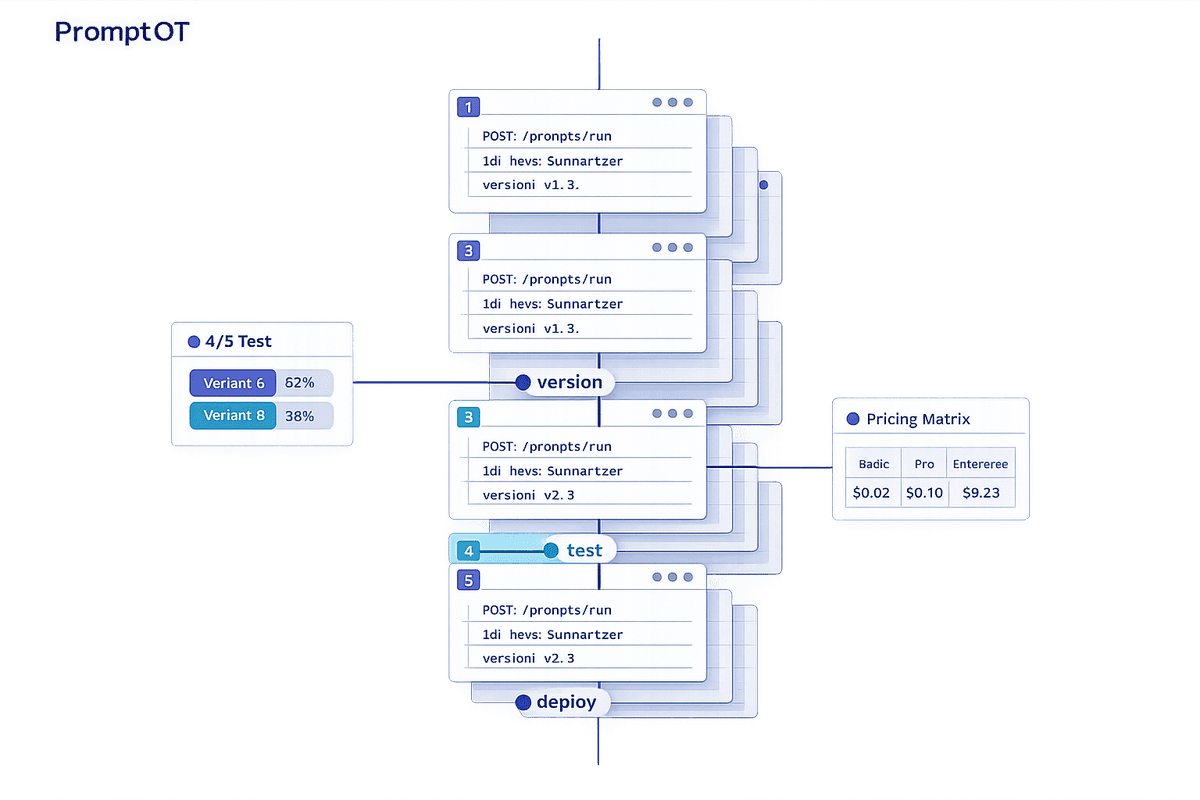

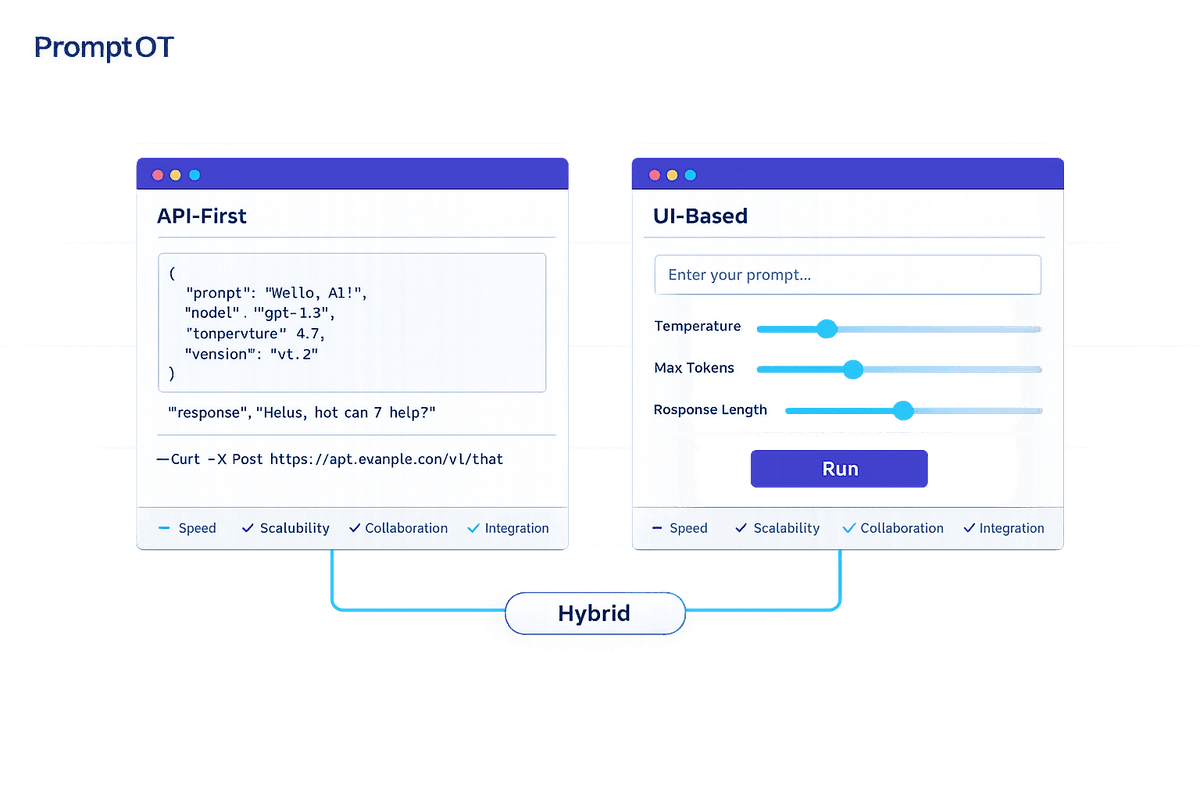

Compare seven prompt management platforms—features, pricing, and use cases to help AI teams manage prompts with versioning, testing, and deployment.

Read more

Browse all articles in the mlops category.

How to build modular, versioned prompts (RTCCO) for reliable AI: centralize prompts, enforce output formats, add guardrails, testing, and production workflows.

Read more

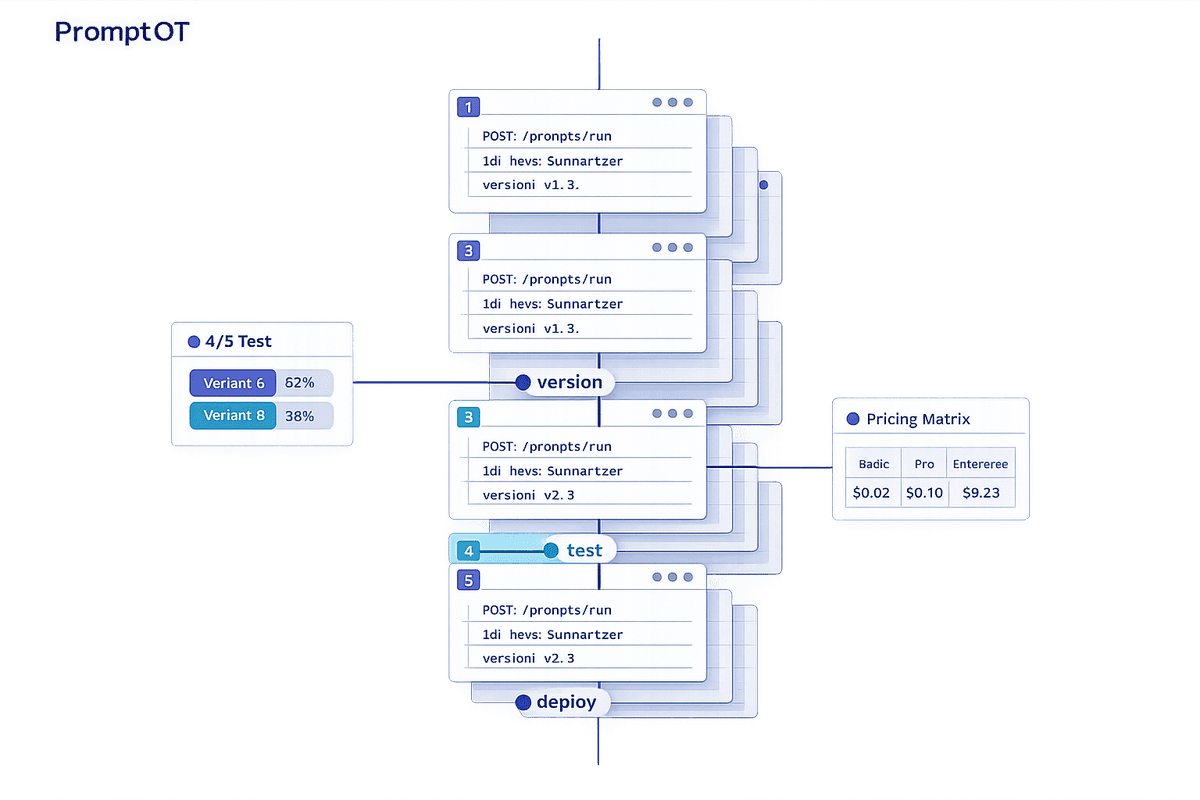

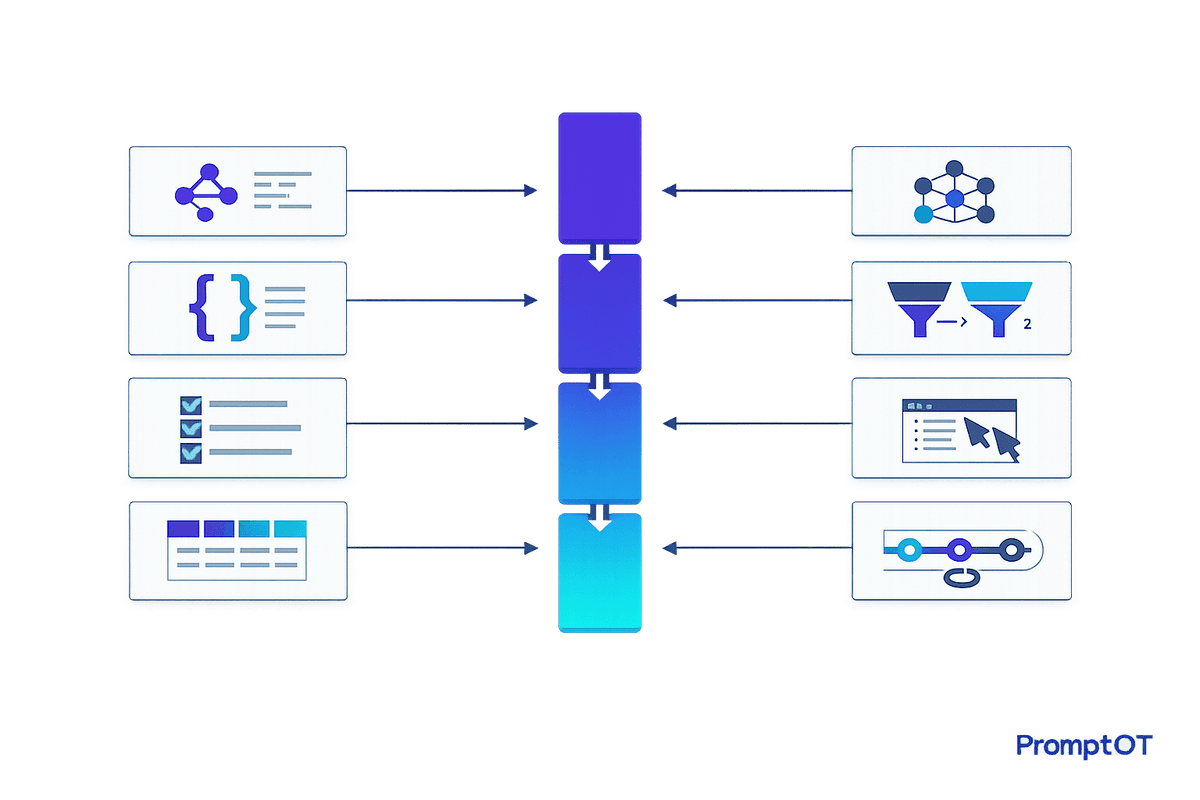

A practical guide to scaling enterprise prompt management: modular prompts, versioning with rollback, RBAC, env-scoped keys, guardrails, webhooks, interpolation, and provider-agnostic delivery.

Read more

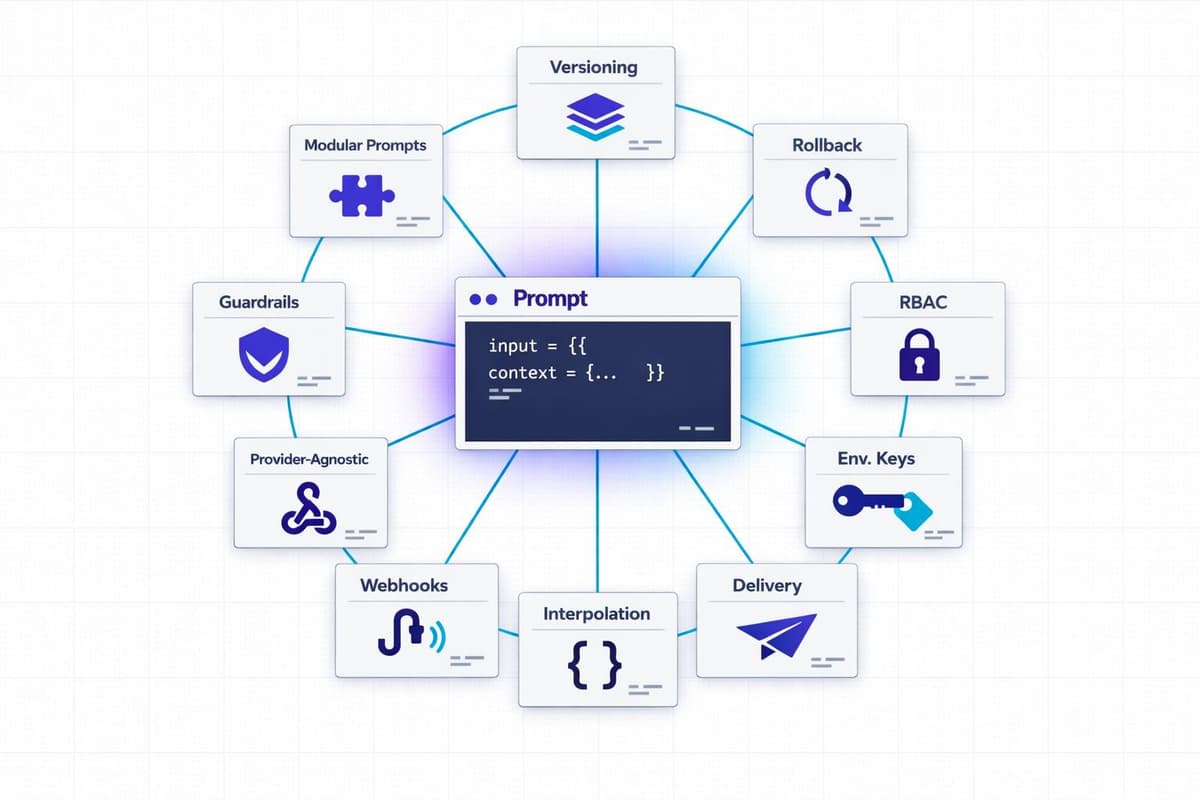

Compare API-first and UI-based prompt delivery—trade-offs in speed, scalability, collaboration, integration, and when to use a hybrid approach.

Read more

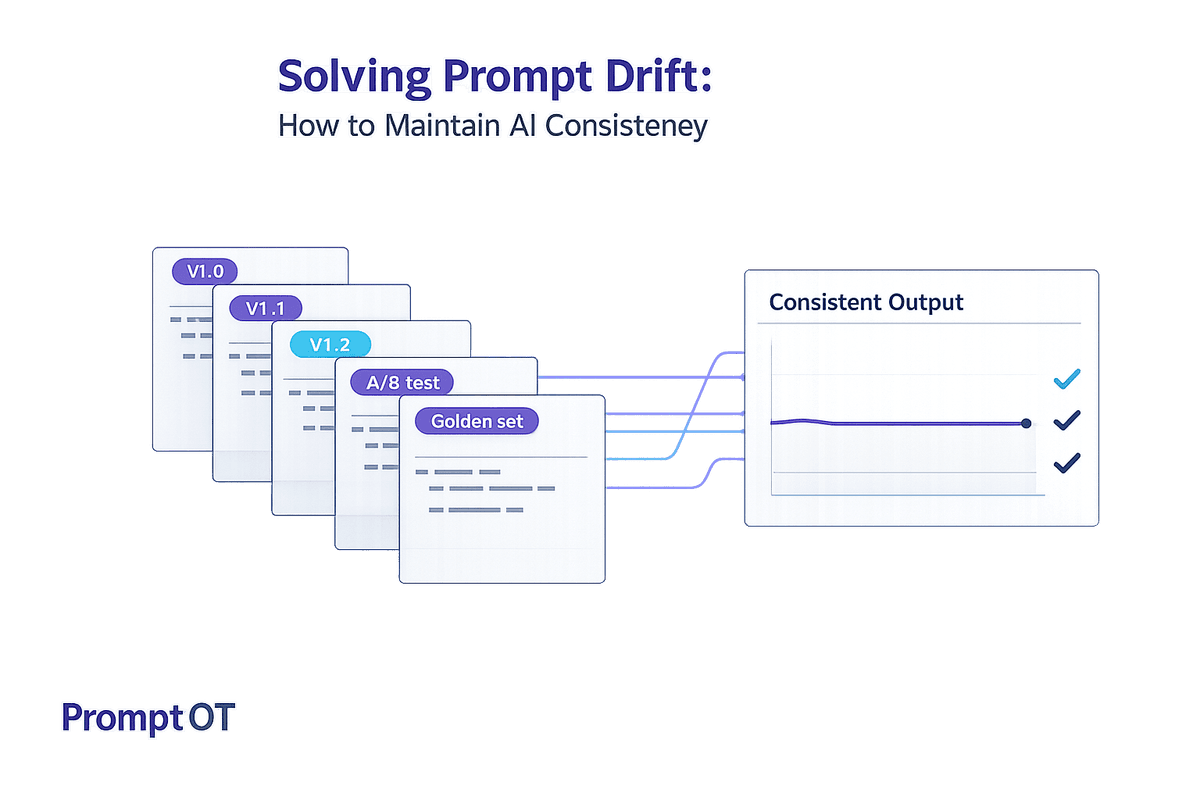

Learn how to detect, prevent, and monitor prompt drift with versioning, golden datasets, A/B tests, and centralized prompt management for reliable LLM outputs.

Read more

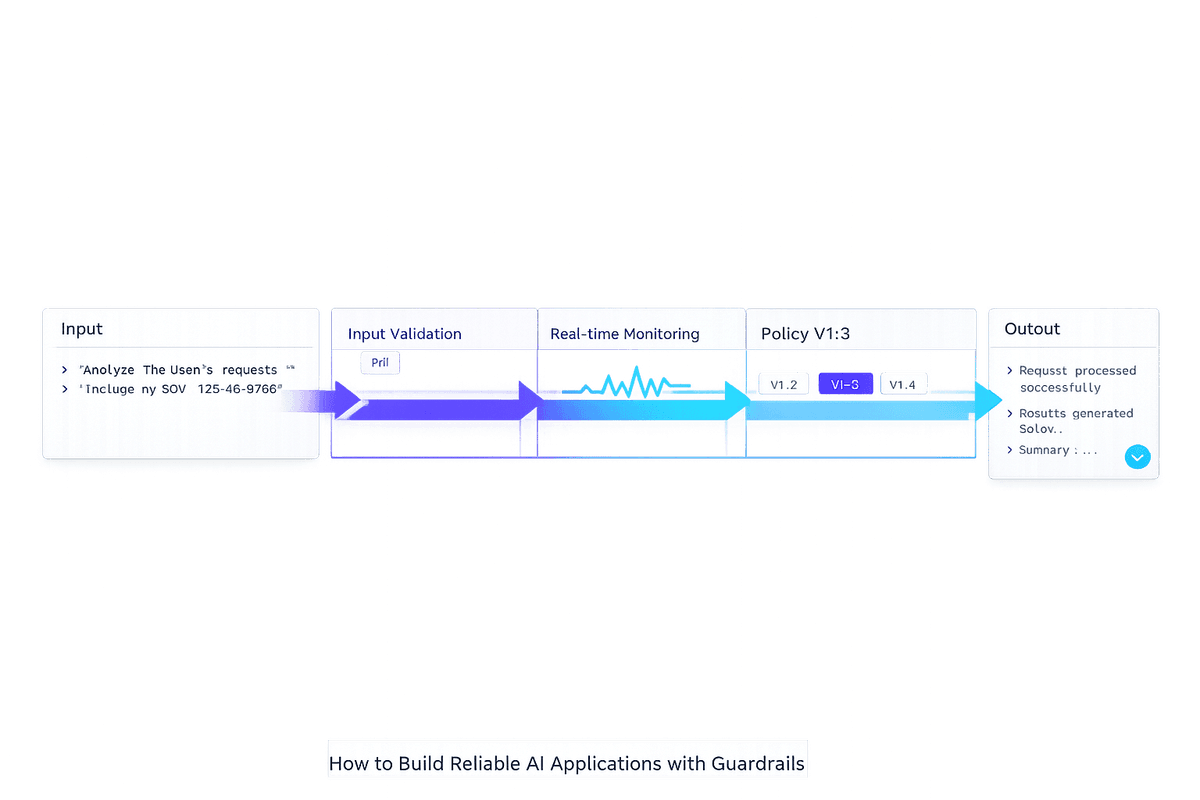

Practical guide to building AI guardrails with input/output validation, real-time monitoring, and versioned policies to prevent prompt injections, PII leaks, and hallucinations.

Read more

Turn prompt chaos into repeatable workflows with shared repos, templates, automated tests, RACI roles, LLM agents, token routing, and real-time collaboration tools.

Read more

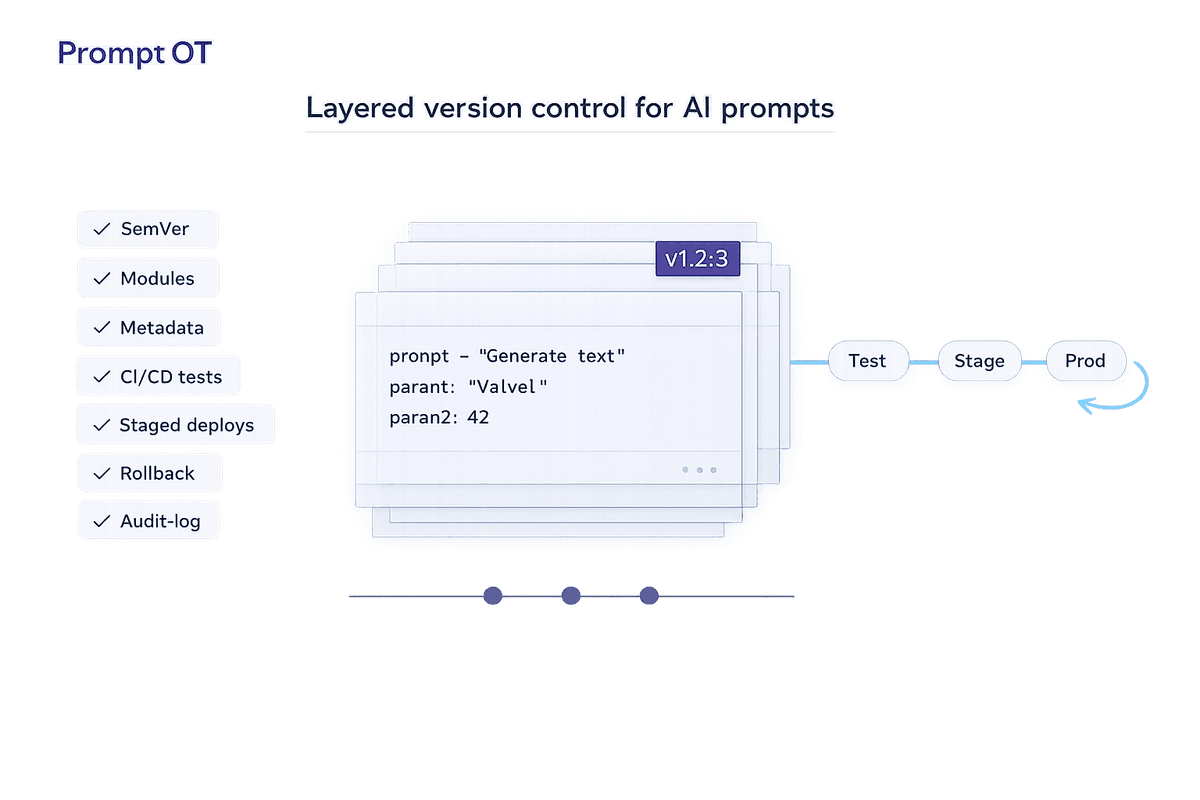

Manage prompts like code using SemVer, modular blocks, metadata, CI/CD testing, staged deployments, and instant rollbacks for reliable LLM outputs.

Read more

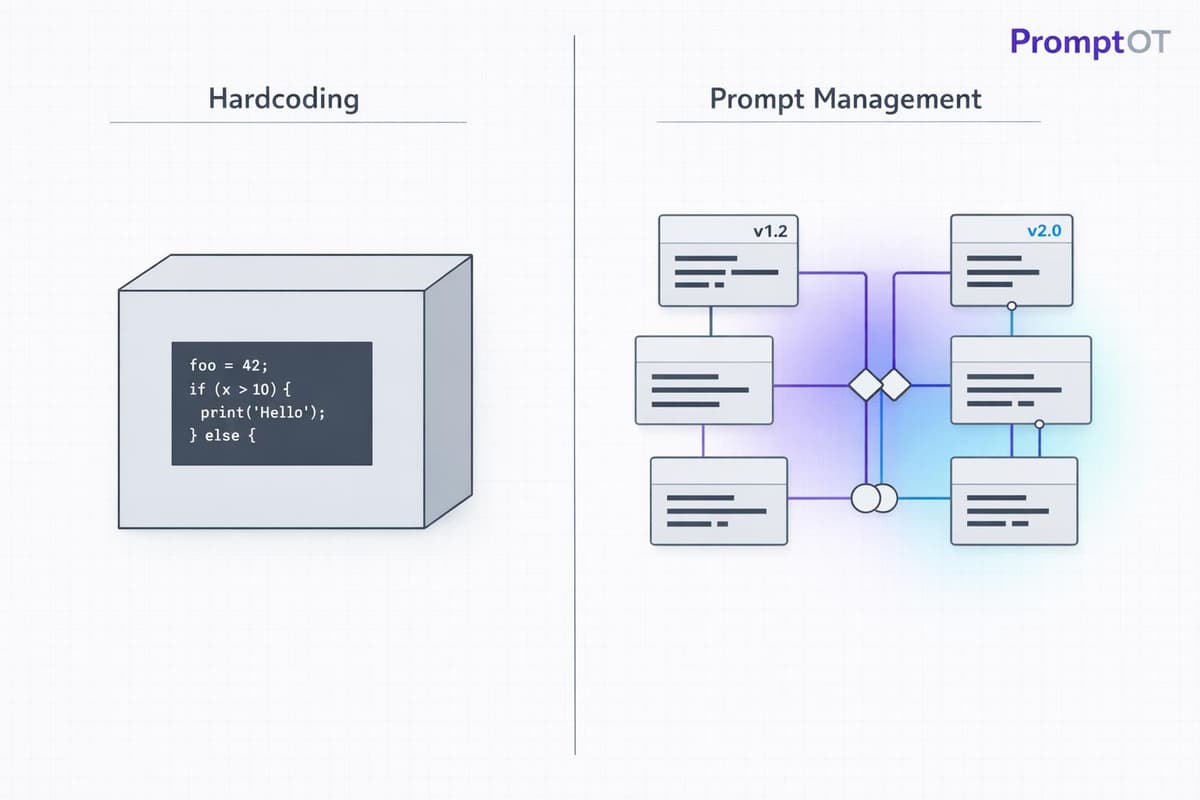

Compare hardcoding and prompt management for LLMs — trade-offs in speed, collaboration, versioning, and infrastructure to choose the right approach.

Read more