How to Design Efficient Prompts for Lower LLM Costs

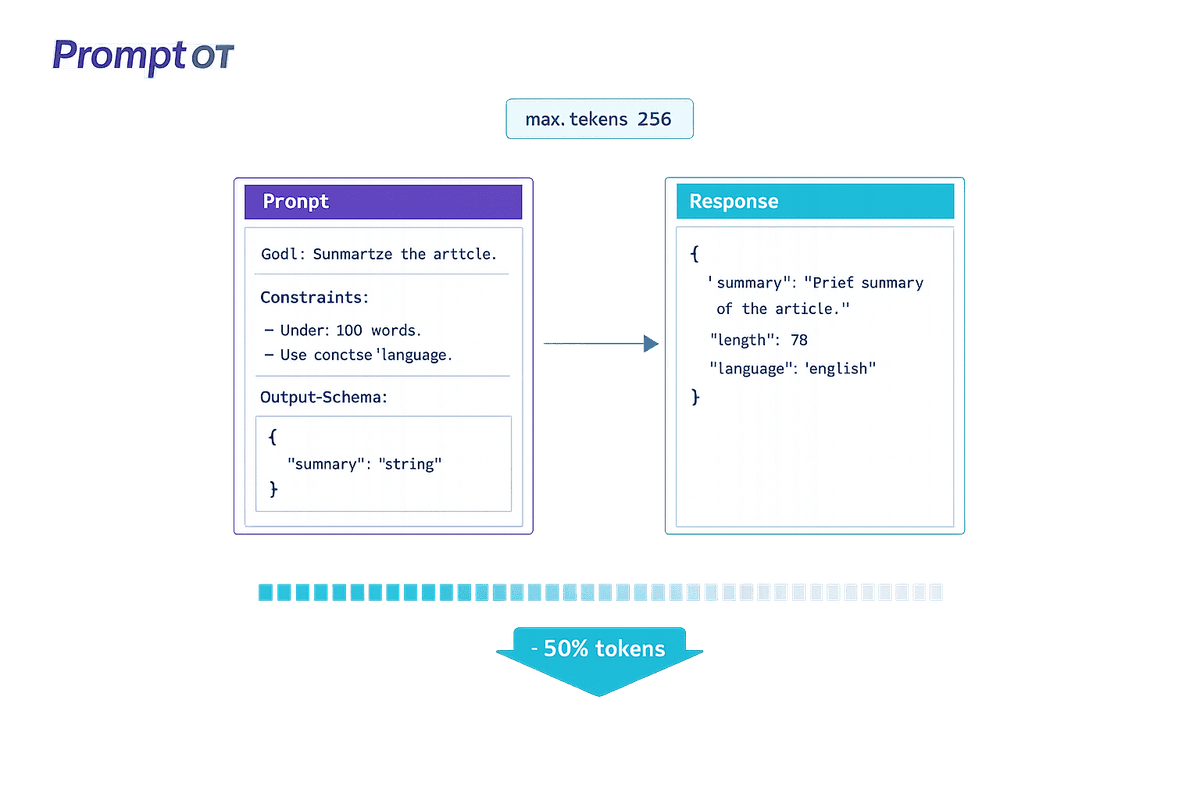

Cut LLM API costs by 40–60% with concise, structured prompts: use delimiters and JSON/YAML, split tasks, set max_tokens, and iterate on tests to reduce retries.

All articles tagged with “prompt engineering”.


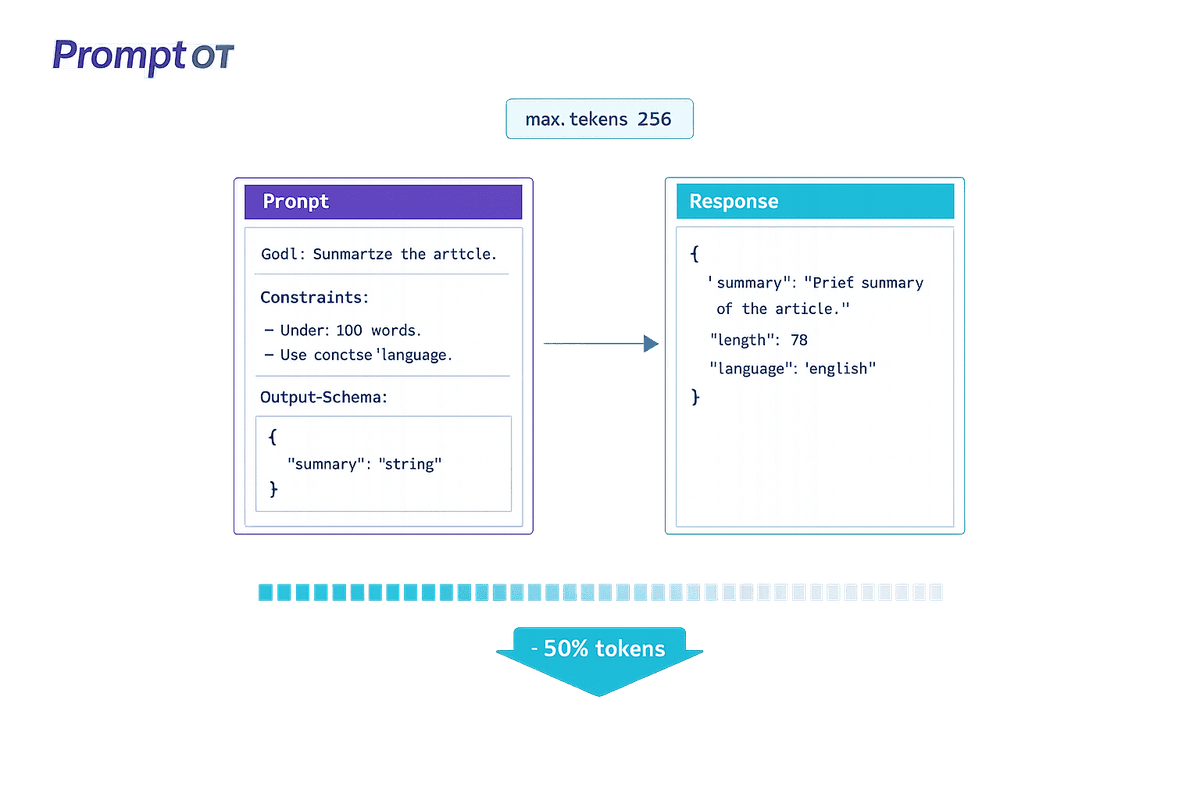

Systematic feedback loops—logging, evaluation, and human review—refine prompts to reduce errors, cut review costs, and accelerate quality improvements.


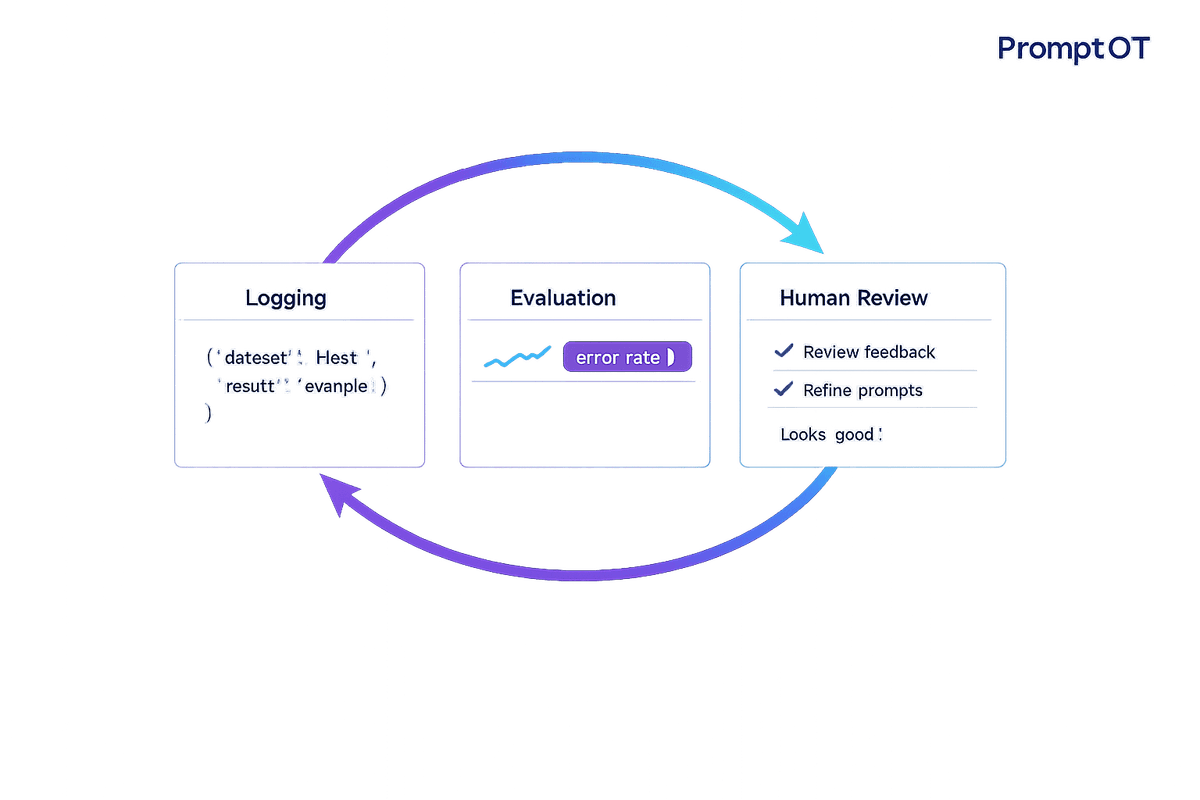

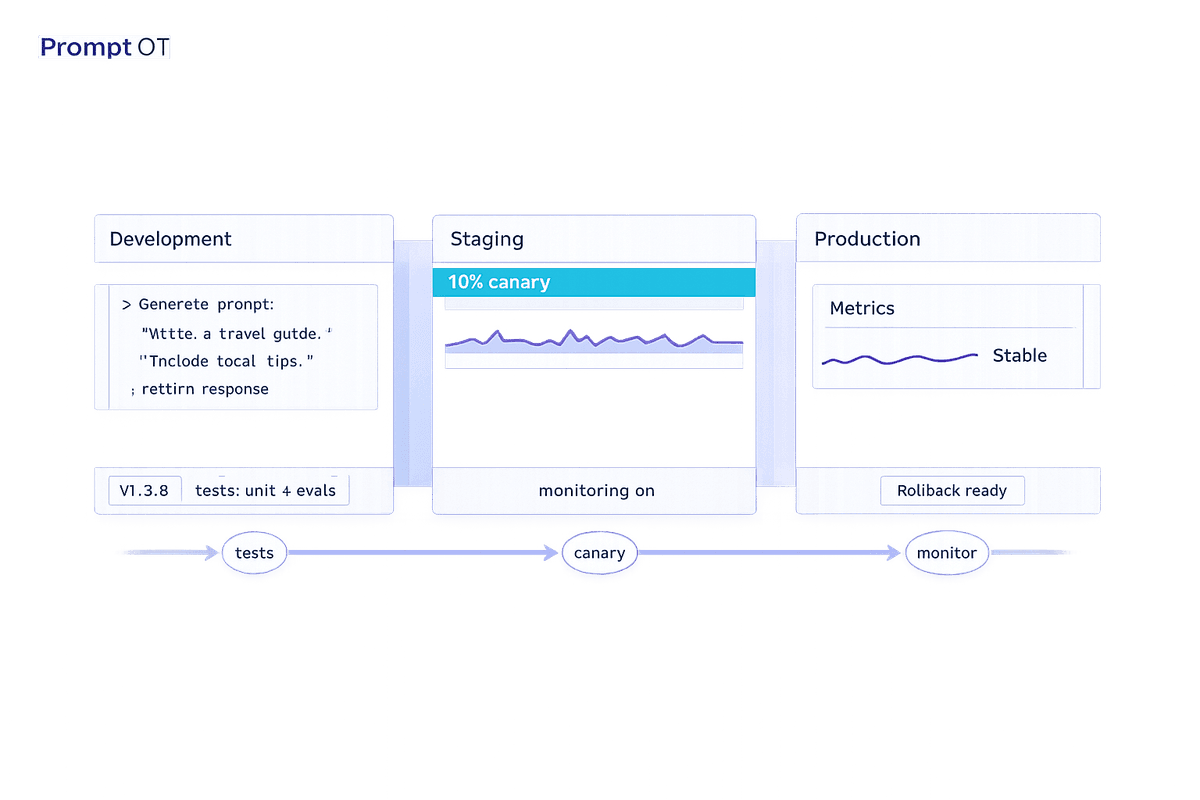

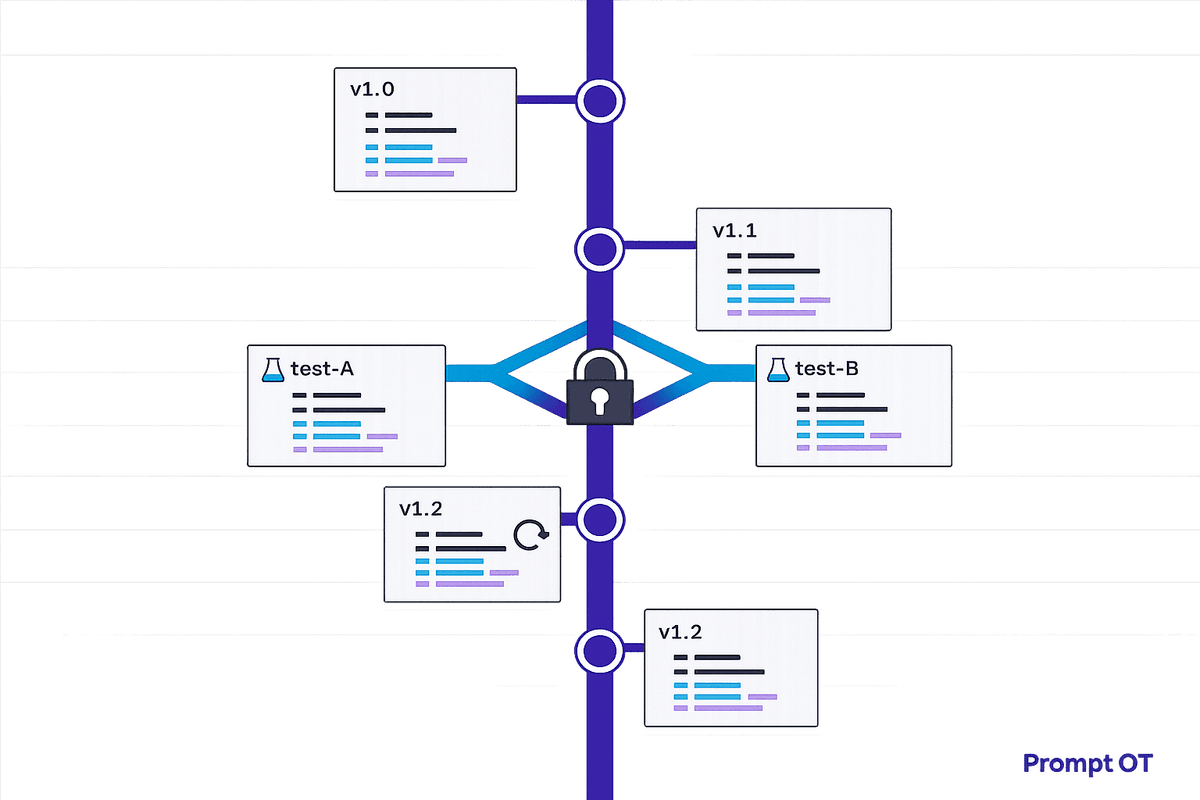

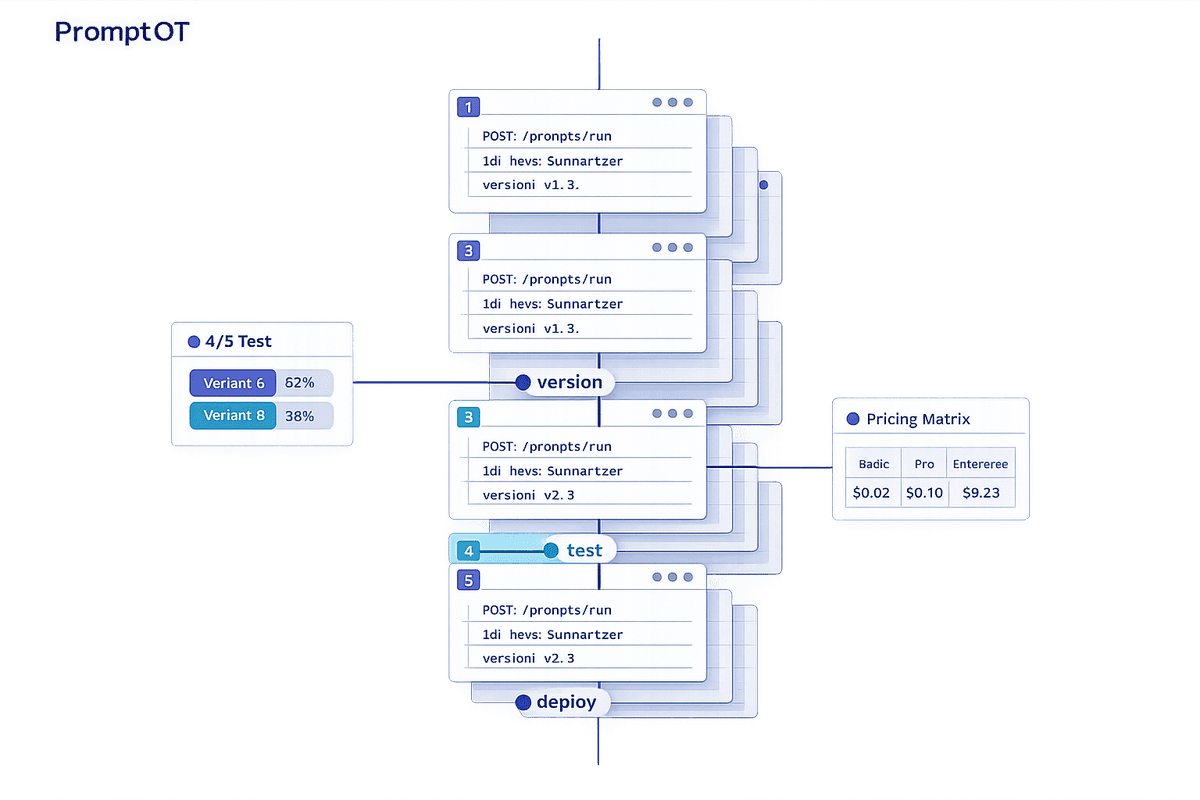

Treat prompts like code: apply semantic versioning, env-specific deployments, automated tests, canary rollouts, monitoring, and fast rollbacks to keep LLMs reliable.


Track every prompt change, prevent silent overwrites, enable safe testing, and roll back instantly with centralized prompt versioning for stable AI production.


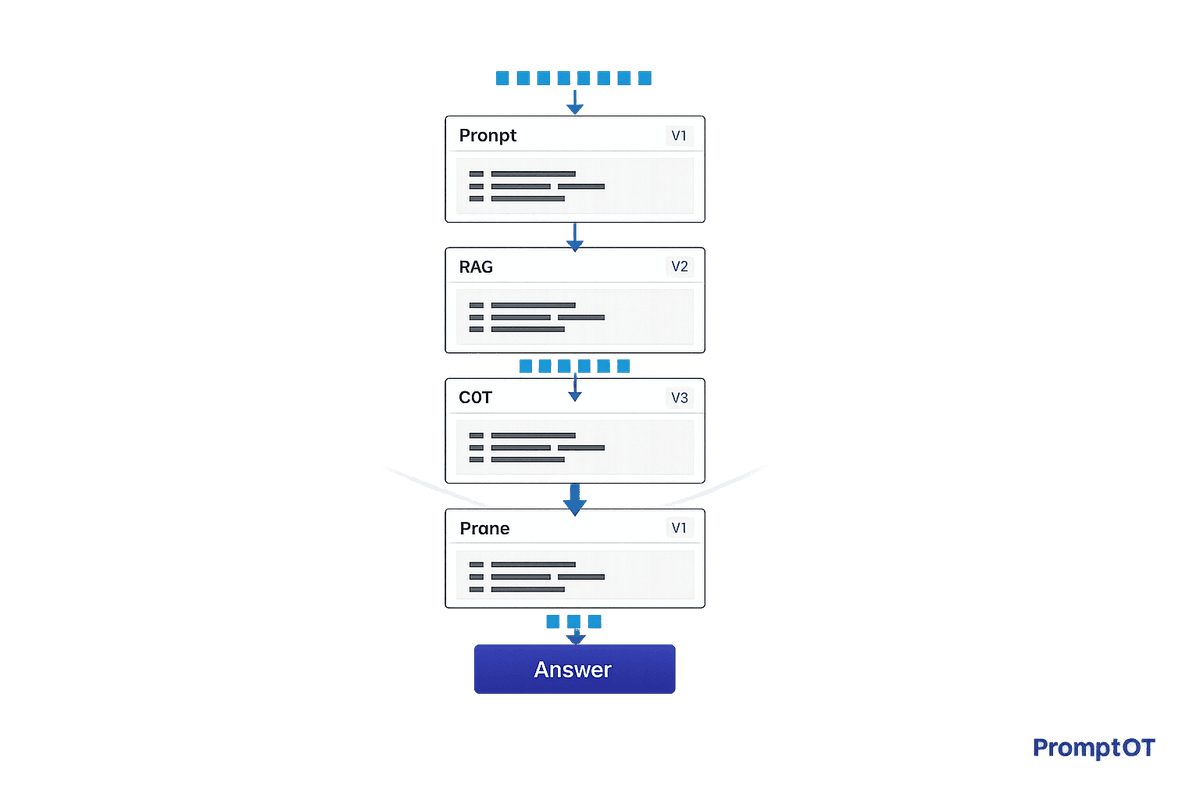

Use structured prompts, RAG, CoT scaffolding, and pruning to cut hallucinations, lower token costs, and scale reliable AI team workflows.


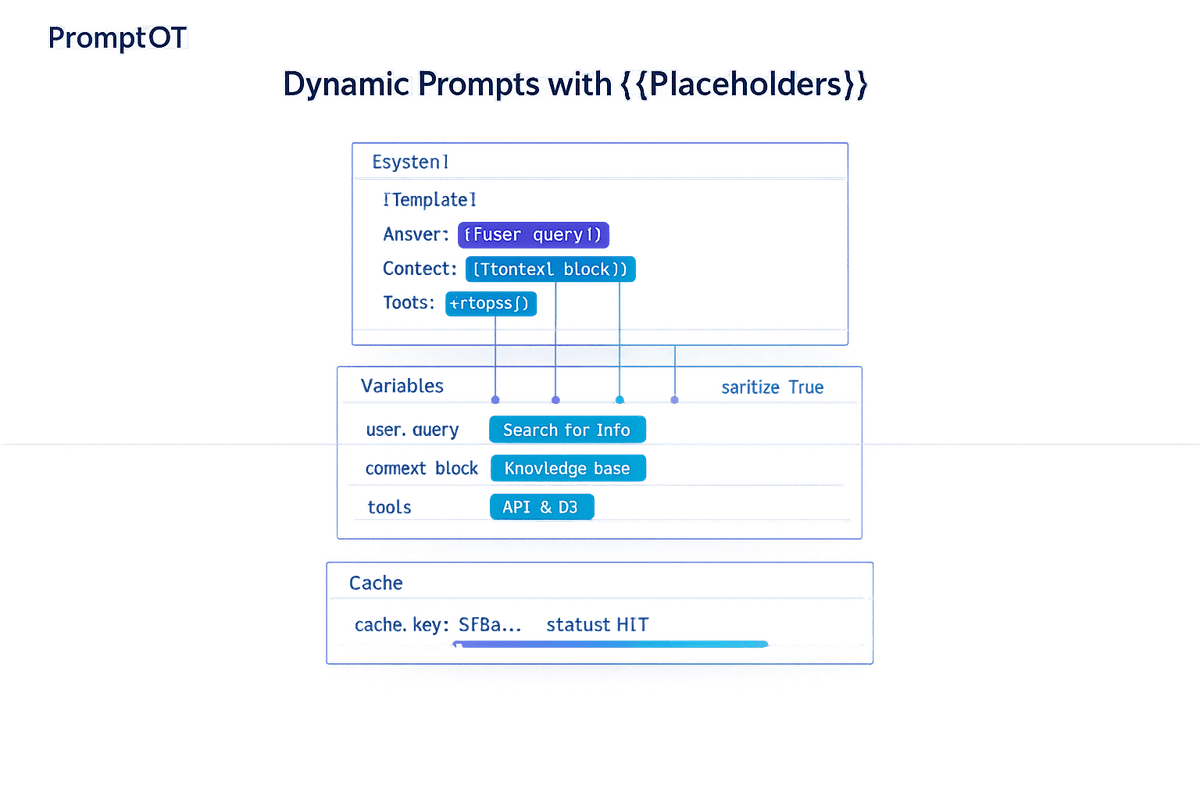

Build reusable LLM prompts using {{placeholders}}—learn runtime variable passing, block-based templates, sanitization, and caching for scalable chatbots and RAG.


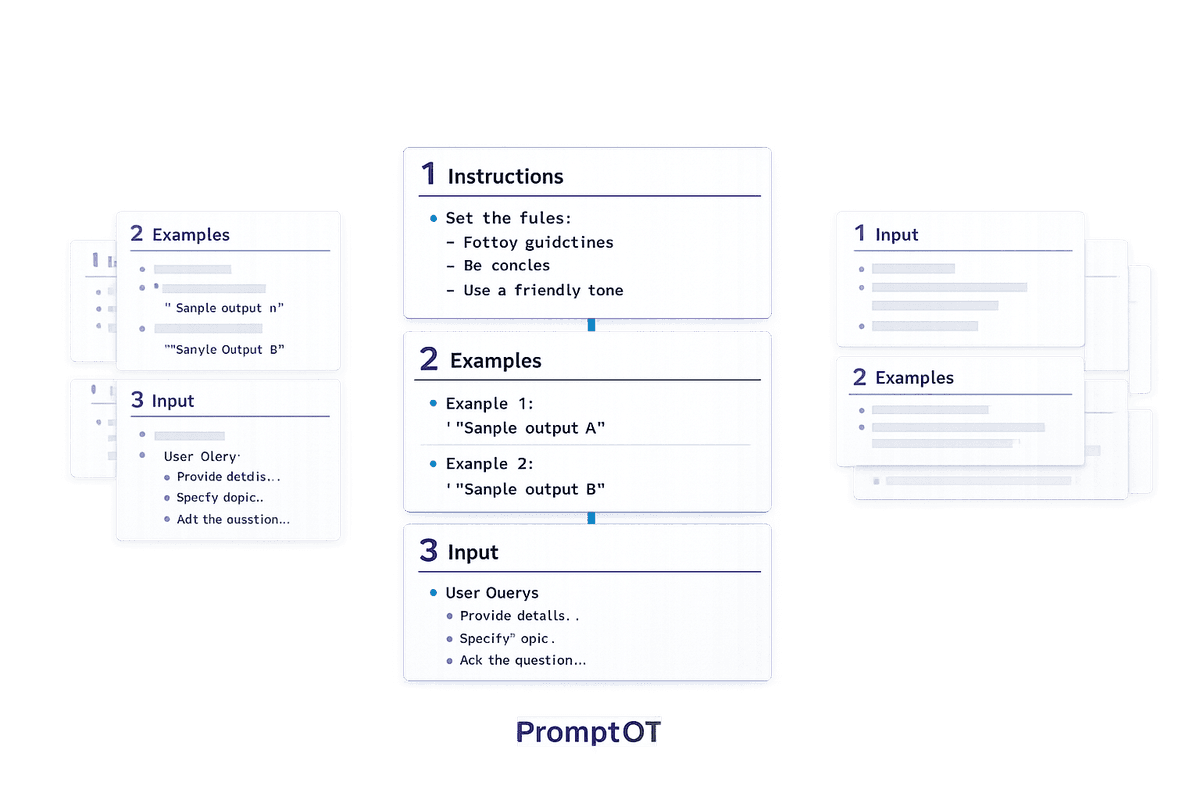

The sequence of instructions, examples, and inputs can drastically change LLM outputs—test and optimize block order to improve reasoning and multimodal accuracy.


Compare seven prompt management platforms—features, pricing, and use cases to help AI teams manage prompts with versioning, testing, and deployment.


How to build modular, versioned prompts (RTCCO) for reliable AI: centralize prompts, enforce output formats, add guardrails, testing, and production workflows.


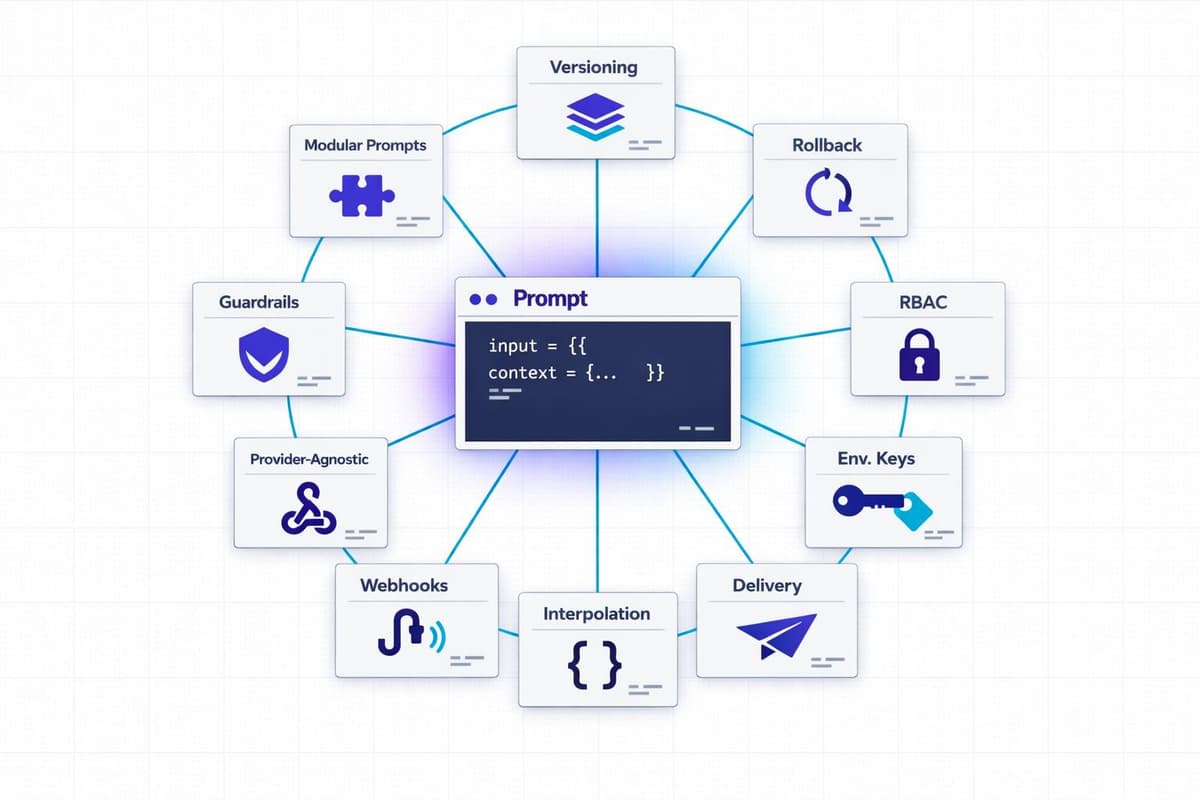

A practical guide to scaling enterprise prompt management: modular prompts, versioning with rollback, RBAC, env-scoped keys, guardrails, webhooks, interpolation, and provider-agnostic delivery.


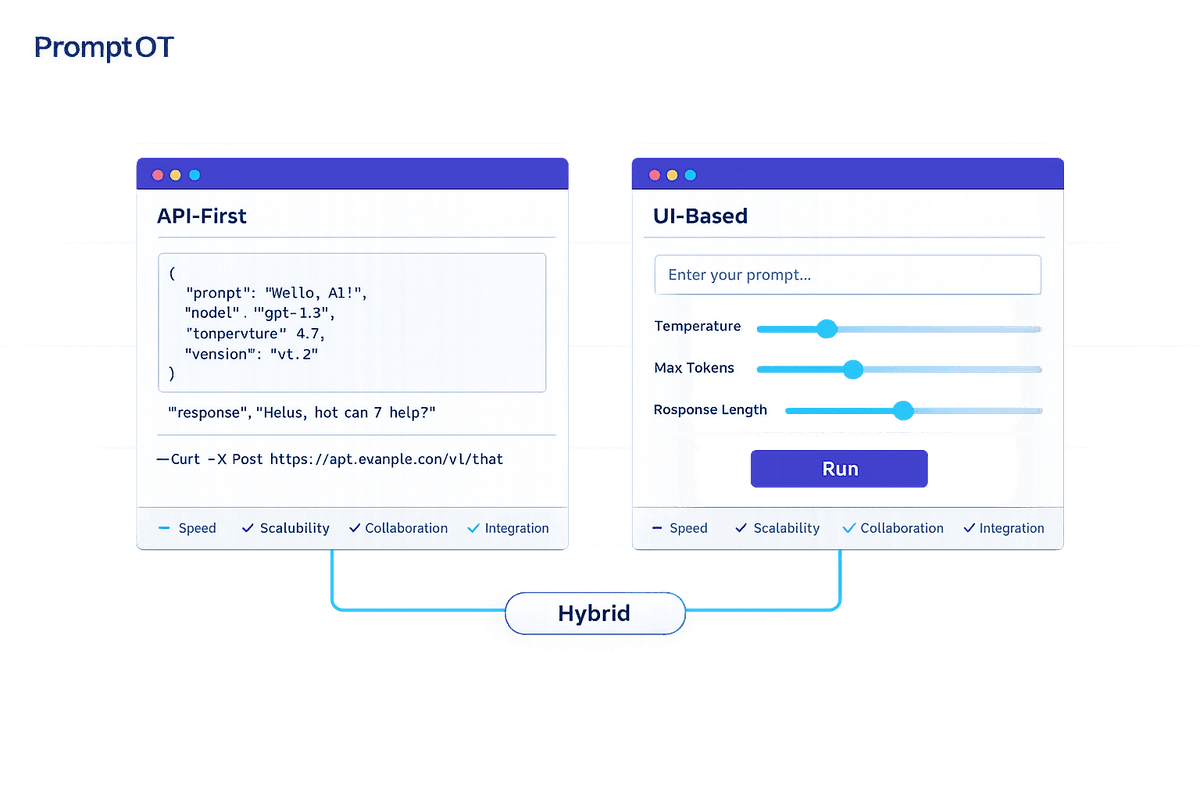

Compare API-first and UI-based prompt delivery—trade-offs in speed, scalability, collaboration, integration, and when to use a hybrid approach.


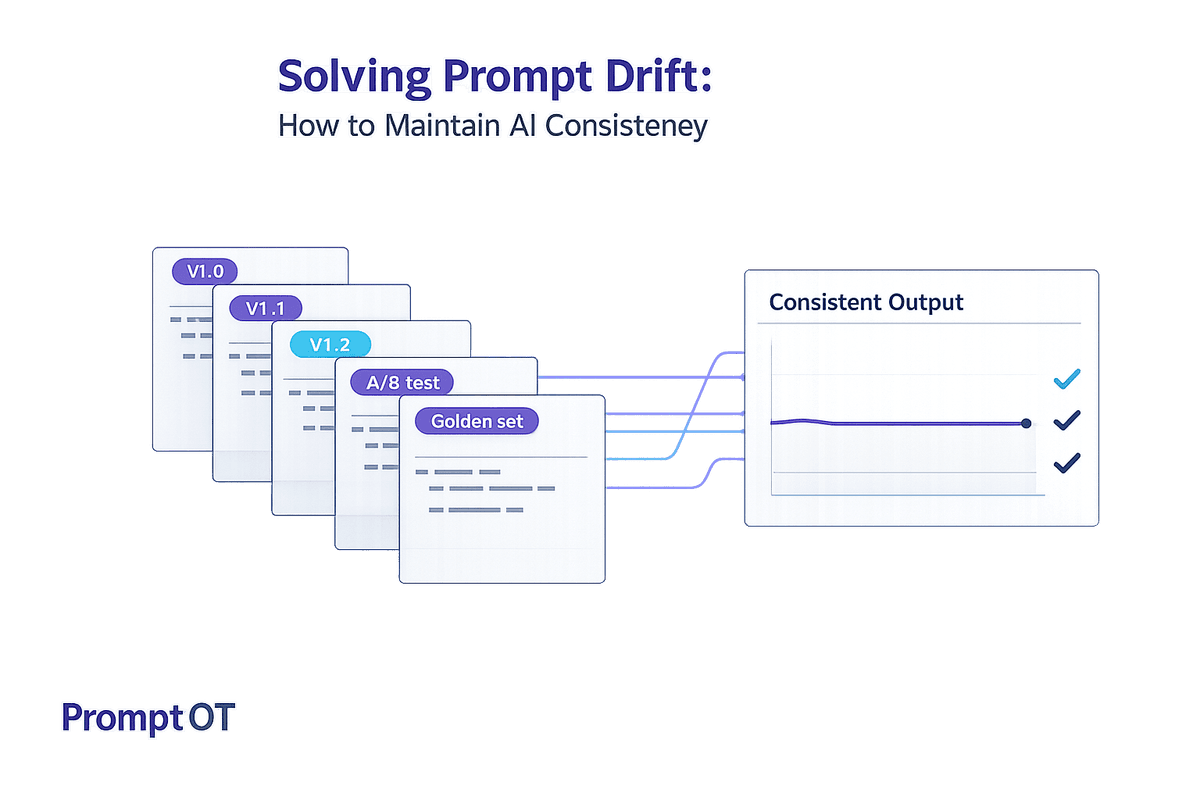

Learn how to detect, prevent, and monitor prompt drift with versioning, golden datasets, A/B tests, and centralized prompt management for reliable LLM outputs.
